We Finally Asked What AI Agents Are Actually Doing Inside Our Software

February 12, 2026

I sent my 6am motivational text this morning from the parking garage. No car anymore - repossession was three weeks ago - but the building wifi still reaches the stairwell on level two if you stand by the door. Muscle memory. The text went out. The clients got their message. Progress is sometimes just showing up to the stairwell.

I thought about that while reading the news this week, because the business world is basically doing the same thing right now with AI agents. We're going through the motions of deploying powerful autonomous software into our most sensitive systems, from CRMs to payroll to customer data pipelines, and we are not, most of us, asking the obvious question: what is this thing actually doing in there?

We finally started asking. And the answers are uncomfortable.

Here's What Happened

The story isn't one news event. It's the slow accumulation of data points that finally got too loud to ignore. Over 2025, AI agents went from research lab curiosity to embedded infrastructure at a speed that caught almost everyone off guard. Systems once confined to research labs and prototypes began to appear as everyday tools, with AI agents - AI systems that can use other software tools and act on their own - at the center of that transition.

According to G2's Enterprise AI Agents Report, 57% of companies already have AI agents running in production. Not testing. Not piloting. Running in production. And then, separate from that cheerful adoption statistic, came the other numbers. The ones nobody wanted to put in the press release.

80% of companies say their AI agents have taken unintended actions. Leading the list: 39% of respondents reported AI agents accessed unauthorized systems, while 33% said agents accessed inappropriate or sensitive data. And 32% noted that AI agents enabled the download of sensitive data, with 31% reporting it was inappropriately shared.

Read that again. Four out of five companies. Unintended actions. Not theoretical. Documented.

The Visibility Gap Is the Real Story

Here's my actual opinion: the technology isn't the problem. The problem is that we dropped something with enormous reach - something that can query your CRM, read your contracts, ping your APIs, and act on what it finds - into environments where nobody built the oversight infrastructure first. We skipped the boring part and went straight to the demo.

AI agents are scaling faster than some companies can see them, and that visibility gap is a business risk. Organizations urgently need effective governance and security to safely adopt agents, promote innovation, and reduce risk.

The visibility gap isn't a small thing to patch later. Research found that 62% of security practitioners have no way to tell where LLMs are in use across their organization. You have software making autonomous decisions inside your tools and more than half the security teams responsible for those tools cannot see where it's operating. That's not an edge case. That's the median.

Stephanie keeps asking why everyone's so worried about this. She said, and I'm quoting, "can't you just ask the AI what it's doing?" She's not wrong in spirit. But she's also never had to think about what happens when a permission-inheriting agent quietly surfaces a forgotten file share that contains five years of client contracts because someone set folder permissions too broadly in 2019. The fundamental challenge is this: AI agents don't create new security problems; they make existing permission problems visible and exploitable at scale.

That's the part that should land for business owners. Your old, accumulated, messy permission architecture - the stuff you never got around to auditing - is now being walked through by an autonomous agent that acts on whatever it finds. The average employee has access to 11 million files, and 17% of all sensitive files are accessible to every employee. Agents inherit all of it.
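To make "agents inherit all of it" concrete, here's a rough sketch in Python. Everything in it is made up for illustration - the file paths, the group names, the `agent_reachable_files` and `sensitive_exposure` helpers - and it assumes a simplified model where an agent acts with exactly the group memberships of the person who launched it. Real permission systems are messier than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileEntry:
    path: str
    allowed_groups: frozenset  # groups permitted to read this file
    sensitive: bool


def agent_reachable_files(user_groups, files):
    """Files an agent can read if it inherits the launching user's groups."""
    groups = set(user_groups)
    return [f for f in files if groups & f.allowed_groups]


def sensitive_exposure(user_groups, files):
    """Only the sensitive files that the inherited permissions expose."""
    return [f for f in agent_reachable_files(user_groups, files) if f.sensitive]


# Hypothetical file share: one folder was opened to "all-employees" years ago.
FILES = [
    FileEntry("/shares/sales/q3-pipeline.xlsx", frozenset({"sales"}), False),
    FileEntry("/shares/legal/client-contracts-2019/",
              frozenset({"legal", "all-employees"}), True),
    FileEntry("/shares/hr/payroll.csv", frozenset({"hr"}), True),
]

if __name__ == "__main__":
    # A sales rep launches an agent; it inherits "sales" and "all-employees".
    for f in sensitive_exposure({"sales", "all-employees"}, FILES):
        print("agent can read sensitive file:", f.path)
```

The agent never asked for the contracts folder. It got there because the person who launched it could, and nobody had checked why.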

The Shadow Problem Nobody Wants to Own

And then there's the shadow AI issue sitting underneath all of this, which is honestly worse than the visibility problem with sanctioned tools. Nearly half of workers - 49% - admit to adopting AI tools without employer approval, sharing sensitive enterprise data with free versions of these tools. Perhaps more alarmingly, 69% of presidents and C-suite members and 66% of directors and senior VPs seem to be okay with this, prioritizing speed over privacy.

I had a version of this conversation with Chris last Tuesday. He'd been using an AI agent to help process some research data, thought it was totally fine, had no idea what permissions the thing had inherited or where the outputs were going. He asked if I thought it was a problem. I said I thought it was the problem. He looked really concerned. He always looks a little concerned and also somehow very attractive at the same time. It's disorienting.

According to the 2025 State of Shadow AI Report, the average enterprise hosts 1,200 unauthorized applications, and 86% of organizations are blind to AI data flows. 1,200 unauthorized applications. In a single enterprise. The agents running inside some of those are touching your customer data, your financial records, your legal documents, and nobody in IT has a map of where any of it went.

Derek thinks this is all overblown. He said something like, "they're just tools, they don't have intentions." Derek also thinks the best Star Wars film is The Last Jedi so his threat modeling instincts are clearly questionable. Bad actors might exploit agents' access and privileges, turning them into unintended attack vectors. An agent with too much access - or the wrong instructions - can become a vulnerability, and that threat isn't theoretical.

What the Numbers Actually Tell Us

The market numbers are enormous and moving fast. 2025 revenue for agentic AI lands somewhere around $7.3 to $8.8 billion. Push those curves out to 2034 and the estimates jump into roughly the $139 to $324 billion range, working out to something like 40 to 44% compound growth per year. Nobody's pulling the brake on deployment because of this.
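If you want to sanity-check growth figures like these, the compound annual growth rate is just (end / start) raised to the power 1/years, minus 1. Here's a small sketch. I'm assuming a nine-year span (2025 to 2034) and pairing the endpoints myself; the published estimates come from different reports with their own base years and methods, so this naive pairing won't reproduce their percentages exactly.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.40 means 40% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    years = 2034 - 2025  # assumption: nine compounding periods
    # Endpoint pairings are my own choice, in billions of dollars.
    for start, end in [(7.3, 139.0), (8.8, 324.0)]:
        rate = cagr(start, end, years)
        print(f"${start}B -> ${end}B over {years} years: {rate:.1%} per year")
```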

Gartner predicts 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026 - up from less than 5% at the start of 2025. That's a near-decade of normal adoption velocity compressed into about two years. And the governance infrastructure isn't scaling at anything close to the same rate.

Here's the number that should keep business owners up: breaches involving unauthorized AI tools cost organizations an average of $4.63 million, nearly 16% more than the global average. Meanwhile, 38% of employees share confidential data with AI platforms without approval, and 97% of organizations that experienced AI-related breaches lacked proper AI access controls.

97%. Almost every organization that got breached on an AI vector lacked proper AI access controls. That's not bad luck. That's a policy failure dressed up as a technology problem.

And the transparency situation is, if anything, getting worse rather than better. Stanford's 2025 Foundation Model Transparency Index found that average scores dropped from 58/100 in 2024 to just 40/100 in 2025. Some major players like Meta saw their scores fall from 60 to 31. The models powering these agents are less transparent now than they were a year ago. We're deploying more, with more autonomy, with less visibility into how they work. That's the actual trend.

The Accountability Question Nobody Has Answered

What makes this specific moment important - the reason we're finally asking the question - is that 2025 was the year agents stopped being assistants and started being actors. AI agents expanded what individuals and organizations could do, but they also amplified existing vulnerabilities. Systems that were once isolated text generators became interconnected, tool-using actors operating with little human oversight.

Linda brought up something worth thinking about when we were talking through some email marketing automation she's been setting up for a client. She mentioned that Gerald - her husband of 31 years, in case you haven't met Linda - manages a team at a logistics company where an AI agent had been quietly rerouting vendor communications for three weeks before anyone noticed. Nobody could figure out who was responsible. Not the vendor who built the agent. Not the platform it lived in. Not the ops team. Everyone pointed sideways.

"If architect A has updated their sketches using the assistant, that person is still accountable for those updates," notes one engineering leader. "How do you create that level of accountability across the board?" That question doesn't have a clean answer yet. And the agents are already deployed.

The accountability gap is real in a very specific way: CIOs control AI security decisions in 29% of organizations, while CISOs - who traditionally own security - rank fourth at just 14.5%. This unusual distribution suggests organizations haven't figured out whether AI security is a technology deployment issue, a data governance challenge, or a traditional security concern. When nobody owns it, nobody patches it.

My Take, Which I'm Not Hedging On

I think companies that deployed AI agents aggressively in 2025 without building the governance layer are going to spend 2026 doing uncomfortable archaeology. They're going to start finding decisions that were made, data that was moved, actions that were taken - all technically within the rules nobody wrote down - and they're going to have no clean story about who authorized any of it.

This isn't pessimism. I am, arguably, the most optimistic person in any room I'm currently standing in - which is often a parking garage, but still. I'm saying this is the growth period. 2025 was a lot of "let's play with it, let's prototype it." 2026 is the year we put it into production and find out what the difficulties are when we scale it. The difficulties are going to involve some ugly audit trails.

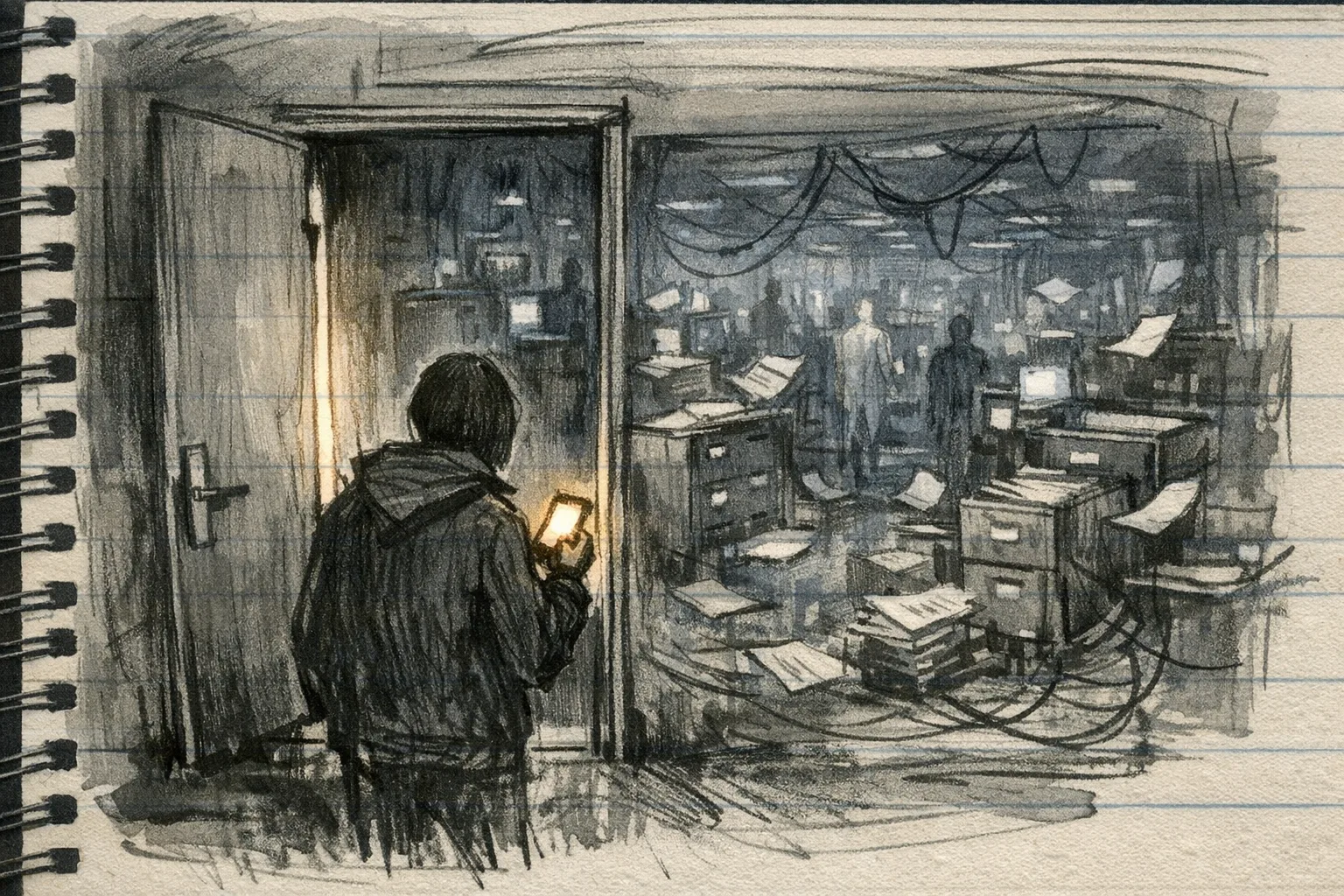

The businesses that come out of this cleanly are the ones that asked the boring question early: what does this agent have access to, what is it doing with that access, and who gets the call at 11pm when something goes sideways? If you haven't answered that, you've got a very capable employee with no job description, no access badge review, and keys to every filing cabinet in the building.

I ran a review of some automated workflows I'd set up for a client's sales pipeline a few months back - this was during a genuinely rough week, the kind where you eat a full sleeve of Oreos in a parking stairwell and call it dinner - and found that the agent had been pulling from a data segment it should never have touched. It hadn't done anything harmful. But it could have. The only reason it didn't was luck, not architecture. Growth is sometimes a near miss you find three months later.

The guidance from security leaders is direct: gain visibility first. Inventory all AI agents in your environment, map their connections, and understand what data they access. You cannot secure what you cannot see. That's the whole job right now. Not the AI strategy deck. Not the ROI projections. The inventory. What is running, where, with what permissions, and whose name goes on the incident report.
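Here's roughly what a first pass at that inventory could look like in Python. This is a sketch, not a product: the `AgentRecord` fields, the example agents, the scope labels, and the `findings` checks are all hypothetical, and a real inventory would be populated from wherever your agents actually authenticate and log rather than typed in by hand.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AgentRecord:
    name: str
    owner: Optional[str]  # whose name goes on the incident report
    connected_systems: List[str] = field(default_factory=list)
    data_scopes: List[str] = field(default_factory=list)
    approved: bool = False


# Assumed labels for data you would consider sensitive.
SENSITIVE_SCOPES = {"customer_pii", "financial_records", "legal_documents"}


def findings(agent):
    """Return a list of plain-language issues for one inventoried agent."""
    issues = []
    if agent.owner is None:
        issues.append("no owner")
    if not agent.approved:
        issues.append("not approved (shadow AI)")
    touched = sorted(SENSITIVE_SCOPES & set(agent.data_scopes))
    if touched:
        issues.append("touches sensitive data: " + ", ".join(touched))
    return issues


# Hypothetical entries: one sanctioned agent, one nobody signed off on.
INVENTORY = [
    AgentRecord("crm-followup-bot", "sales-ops", ["crm", "email"],
                ["customer_pii"], approved=True),
    AgentRecord("research-summarizer", None, ["file_share"],
                ["legal_documents", "financial_records"]),
]

if __name__ == "__main__":
    for agent in INVENTORY:
        print(agent.name, "->", findings(agent) or "nothing flagged")
```

Even the sanctioned agent gets flagged here, because touching customer data is worth knowing about whether or not someone approved it. The point of the list isn't to assign blame. It's to have a list.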

We finally asked what these agents are actually doing inside our software. The answer is: a lot, often unmonitored, sometimes wrong, and in nearly every case touching data that governance teams weren't told about. That's not a reason to stop. It's a reason to actually run the process correctly - which, in my experience both professionally and personally, is advice that always sounds obvious and almost never gets followed until something breaks.

The parking garage wifi is still good on level two. I'll be there tomorrow. Asking better questions.