The White-Collar AI Panic Hit Our Office. Half the Team Is Fine, Half Is Not.

February 14, 2026

I got here at 7:30 this morning. My dad was already at his desk. I don't know how he knows. I never know how he knows.

I made coffee and sat down and opened the news and there it was again - another round of headlines about AI eating white-collar jobs. Anthropic CEO Dario Amodei. Ford CEO Jim Farley. Microsoft's AI chief Mustafa Suleyman. All of them saying, in various registers of urgency, that the professional job market is about to break in ways that haven't been seen before. We've been stress-testing our own operations for a while now, so none of this is entirely new territory for us. But something shifted in the last few weeks. The headlines stopped feeling abstract. I watched them land on people I work with - some of them visibly, some quietly - and I started paying attention to who flinched and who didn't.

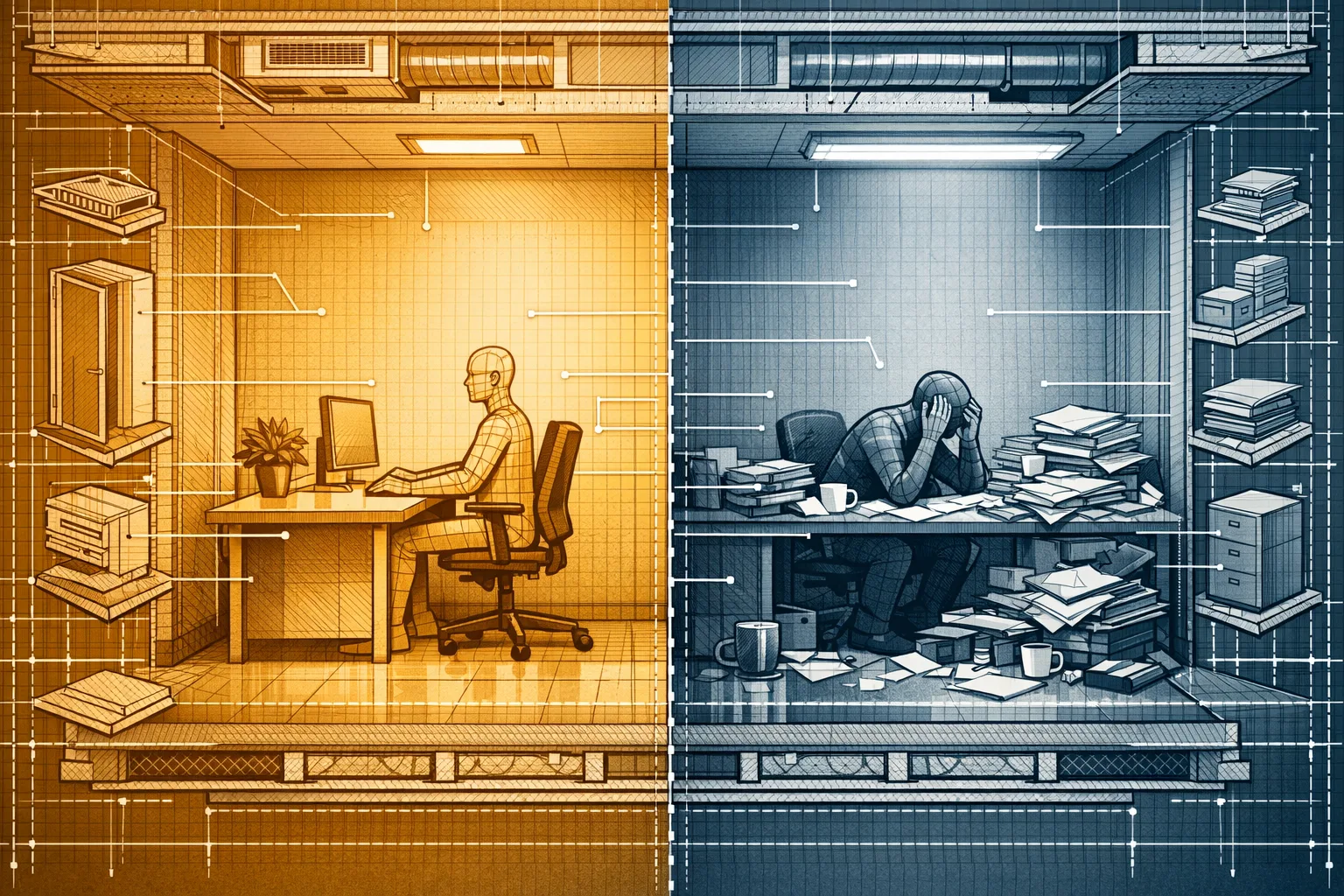

The split was almost exactly half and half. And I think that split tells you everything about how this moment actually works.

What's Actually Happening Out There

Let's start with the data, because it's real and it's bad and I think a lot of business owners are still treating it like background noise.

Anthropic CEO Dario Amodei warned that AI could wipe out half of all entry-level white-collar jobs. Ford CEO Jim Farley said AI would cut in half the number of white-collar jobs in the U.S. These aren't fringe predictions from people who don't know the technology. These are people building and deploying it at scale, saying this out loud to journalists and shareholders.

Then Microsoft AI chief Mustafa Suleyman went further. He said that AI could replace most white-collar work within 12 to 18 months. Twelve to eighteen months. That's not a prediction about the future. That's a prediction about right now.

And just this week, Andrew Yang said "AI is now able to do the work of a very, very smart human in minutes or even seconds," warning that it will displace marketers, coders, designers, lawyers, accountants, and call center workers - joining a chorus of business leaders from Anthropic to Ford.

In January 2025, the U.S. Bureau of Labor Statistics reported the lowest rate of job openings in professional services since 2013 - a 20% year-over-year drop. Meanwhile, the American Staffing Association revealed that approximately 40% of white-collar job seekers in 2024 failed to secure a single interview. Even more telling is Vanguard's finding that hiring for positions paying over $96,000 annually has reached a decade-low level.

That last one hit me differently than the others. Not the layoff counts. Not the CEO quotes. The fact that high-paying professional roles - the ones that were supposed to be safe, the ones that required real skill and education - are being filled at the slowest pace in a decade. Corporate profits are robust, productivity is soaring, and GDP continues to rise. Yet hiring for professional roles in finance, technology, consulting, marketing, and law has slowed dramatically or stopped altogether. Positions once considered foundational to corporate growth - entry-level analysts, junior lawyers, content strategists, HR associates - are vanishing quietly but persistently from the job market.

This is the thing that I keep trying to explain to people who want to dismiss the panic as hype. The companies are not struggling. They're making more money. They just don't need as many people to do it anymore.

The Panic Showed Up Here

About three weeks ago, something changed in the office. I can't point to a single moment. It was more like a weather shift. Derek came in one morning and announced - apparently not joking - that he'd asked an AI to explain his job back to him, step by step, and it had done it perfectly. Derek, who will argue about Star Wars lore with a level of conviction most people reserve for matters of actual consequence, seemed genuinely rattled. That's when I knew it was real.

Tory had already been going through enough. His marriage ended, his car situation is complicated in ways I don't fully understand, and he's been relentlessly upbeat about all of it in the specific way that means he's not fine. When the AI displacement headlines started rolling in harder, he just... leaned in further. Started calling himself "future-proof." Built a whole personal development plan around becoming "irreplaceable." I believe him. I just also think that level of optimism is sometimes a symptom, not a cure.

Stephanie asked me if there was a premium AI tool that could handle her entire role and, when I said parts of it probably could, she shrugged and said she'd just fund something new. That's a specific reaction that requires a specific kind of financial cushion and I will not be taking questions on it.

Linda told me she wasn't worried. She's been doing what she does for long enough that she's watched multiple technological waves come and go. Gerald, apparently, reminded her that when ATMs came out, everyone thought bank tellers were done, and yet there are more bank tellers now than in 1970. She told me this with the energy of someone who had workshopped the talking point. I didn't push back, but I also wrote down the teller statistic to check later. (It's true, by the way. The number went up. The nature of the job changed.)

Chris was Chris. If Chris is anxious about anything, he doesn't show it in any way that's legible to me or, I suspect, to anyone else. Chris asked a few smart questions about what kinds of roles were actually disappearing versus what kinds were growing, nodded thoughtfully, and went back to whatever he was doing. This is simultaneously reassuring and slightly maddening.

Here's My Actual Take

I've read enough of the data now to have a position, and my position is this: the panic is not overblown, but the way most people are experiencing it is pointed in the wrong direction.

Everyone is asking "will my job disappear?" The more useful question is "does the way I add value require a human, or does it only require that a set of tasks gets done?" Those are different questions. In 2026, employees have a more specific anxiety: FOBO, the Fear of Becoming Obsolete. This is different from worrying you'll get laid off. FOBO is the creeping sense that your skills are degrading in real time, that you're falling behind faster than you can catch up, and that the window to stay relevant is closing while you're still trying to figure out what relevant means.

That framing is more honest than anything I've seen from a LinkedIn thought leader in the past year. And it maps exactly onto what I watched happen in our office. The people who were fine weren't fine because they were immune. They were fine because they'd already been asking the right version of the question for long enough that this round of headlines didn't reframe their world for them.

The people who weren't fine were - and I say this with actual sympathy - people whose professional identity had been built on knowing how to do specific tasks rather than knowing how to solve specific problems. That's a meaningful distinction. This fear is particularly acute for younger professionals who are watching entry-level learning opportunities disappear. The grunt work that taught people how to think, how to spot patterns, how to develop judgment is being automated away. That's the thing that actually keeps me up at night when I'm honest about it. Not that the work disappears. That the pathway to getting good enough at the work to matter disappears with it.

I rewrote that last paragraph four times. My dad hasn't read any of this yet.

What the Data Says About Who's Right

Here's the uncomfortable thing: the people who are "fine" and the people who are "not fine" are both, in different ways, responding rationally to real information.

In a survey of 2,500 white-collar tech workers, about 60% said they believe their jobs and their entire team could be replaced by AI within the next three to five years, yet they're still using it at least once per day. This is not denial. This is the correct move. You use the thing that might replace you because right now it makes you better at the thing you do, and being better at the thing you do is the only lever you actually control.

But here's the data the "everything will be fine" camp needs to sit with. According to a December 2025 survey of 1,006 global executives, AI is behind at least some layoffs, but those cuts are almost entirely anticipatory - made before the AI has actually demonstrated it can do the job better. Companies are cutting roles on the expectation of a capability that doesn't fully exist yet. That is a different and more frightening thing than cutting roles because the capability arrived.

And yet - and this is the part I think the pure-panic crowd underweights - in some instances, AI has had the reverse effect: making workers less productive. A recent study from the nonprofit Model Evaluation and Threat Research (METR) on AI's impact on software developers found the technology actually made workers' tasks take 20% longer. A study. Published. In 2025. Software developers, the category everyone assumed would go first. Tasks taking twenty percent longer with AI than without it.

Any returns the economy is seeing are largely confined to the tech industry, suggesting that AI disruption has been limited in the real economy. Recent research from Apollo Global Management's chief economist found that while profit margins in Big Tech increased by more than 20% in the fourth quarter of 2025, the broader Bloomberg 500 Index has seen almost no change.

So which is it? I'll tell you what I think. Both things are true simultaneously, and the mistake is assuming they cancel each other out. The disruption is real and uneven. It is hitting some roles hard right now and other roles barely at all. Research from Stanford suggests the changing dynamics are particularly hard on younger workers, especially in coding and customer support roles. Those aren't random categories. Those are the categories where the work is most legibly task-based, most standardized, most possible to describe to a model in a way it can execute.

If your job requires you to handle genuinely novel problems, build trust with specific humans, or make judgment calls in context that can't be fully specified in advance, you have more runway than the headlines suggest. If your job requires you to do well-defined, repeatable, information-processing tasks - even complex ones - you have less runway than you probably want.

What Running a Small Business Actually Looks Like Right Now

Here is the thing I think about from the business-owner side of this. We've been doing our own version of this reckoning for a while. When we ran our software audit, one of the quiet discoveries was how many tasks we were paying humans to do that tools had already made faster and cheaper. That's not a dramatic finding. That's just operational hygiene. But it made me realize that the AI displacement conversation happening at the Fortune 500 level is, in slower motion, happening at every company that touches software.

More than half of employees - 53% - believe new technology will affect their job security, signaling persistent anxiety as AI adoption accelerates. If you are running a team right now and you haven't talked to your people about this directly, you are managing a team where more than half of them are carrying anxiety you haven't acknowledged. That is an operational problem, not just a culture problem. Anxious people don't take creative risks. They don't raise problems. They protect what they have instead of building what's next.

Employees aren't afraid of the technology. They're afraid of leaders who treat AI transformation like a technology project instead of a fundamental restructuring of how work happens, who demand adoption without providing support, and who use efficiency gains to pile on more work rather than create space for people to adapt.

That is the passage I wanted to quote directly because I've watched it happen and it's more accurate than anything I could write from scratch. The tools aren't the problem. The framing is the problem. When you tell a team member "we're implementing AI to make you more productive" and what they observe is that their workload doubled and two of their colleagues weren't replaced when they left, they do the math themselves. They're not wrong to do the math. And if you want them to actually use the tools well, you need to give them a reason to believe the math leads somewhere other than their own elimination.

I spent a weekend last month building out an AI-assisted outreach sequence for a contact list no one had asked me to process. Ran 847 contacts through in about three hours with a 22% open rate. My dad saw the report on Monday and said "good." That was enough. But I was aware while I was building it that I was automating something that would have taken a junior hire two weeks. I wasn't proud of that part. I'm still not sure what to do with it.

The People Who Are Fine Know Something

Linda is probably right that this is not the first technological shift and won't be the last. She's also probably underestimating how different this one is in character - unlike past automation, which primarily targeted blue-collar work, LLMs are poised to transform higher-wage, highly educated professions across multiple sectors. The ATM analogy breaks down when you're talking about the lawyers and analysts and strategists who were supposed to be the safe ones.

But the people who are handling this well - Chris, mostly, and Tory in his own unhinged way - have something in common. They've stopped treating this as a question about their job and started treating it as a question about their skills. That's not inspirational content. That's a practical reframe. The tools keep evolving. The question of whether you know how to use them, adapt them, and think around their edges does not have an AI solution yet.

A recent article published in the Cureus Journal of Medical Science establishes "AI replacement dysfunction," known as AIRD, and outlines common symptoms that may result from the psychological distress related to AI's impacts across the workforce. We are, apparently, at the point where this is a clinical category. Individuals with AIRD may experience anxiety, insomnia, paranoia, denial of AI's relevance, loss of identity, feelings of worthlessness, resentment and hopelessness. I'm not citing this to be dismissive. I'm citing it because I think it tells you how real and how widespread the psychological impact is - real enough that clinicians needed new vocabulary for it.

The half of the team that's not fine isn't irrational. They're processing something real with the tools they have. The question for people running businesses is whether you're going to help them build better tools for that, or just wait for them to figure it out on their own while the anxiety quietly degrades their output.

Linda said I was doing a good job last Thursday. I've been thinking about whether she meant it ever since. That might tell you everything you need to know about where all of us actually are right now - doing our work, trying to matter, wondering if anyone has noticed, hoping the floor stays solid under our feet a little longer.

It probably will. And then one day it won't. The honest answer is that nobody in this building - not me, not my dad, not Dario Amodei - knows which Tuesday that happens on. What we do know is that the people spending today arguing about whether the panic is warranted are losing time they could spend getting harder to replace.

That's my take. I'm committing to it.